I am trying to merge two netCDF files with harpmerge in Python, using two different methods. Each netCDF file is 127 MB. It is important for me to clip the netCDF datasets to my study area.

First method:

I merged these two files with harp in Python:

import os
import harp

myfiles = '…/*.nc'
i = 0
file_names = []
products = []  # list of the products to merge

for filename in os.listdir(os.path.dirname(os.path.abspath(myfiles))):
    base_file, ext = os.path.splitext(filename)
    # Only process Sentinel-5P netCDF files
    if ext == ".nc" and base_file.split('_')[0] == 'S5P':
        product_name = base_file + "_" + str(i)
        try:
            # Clip to the study area and regrid onto a regular 0.01 degree grid
            product_name = harp.import_product(
                base_file + ext,
                operations=(
                    "latitude > 24.8 [degree_north]; latitude < 39.9 [degree_north]; "
                    "longitude > 43.8 [degree_east]; longitude < 63.4 [degree_east]; "
                    "bin_spatial(1511,24.8,0.01,1961,43.8,0.01); "
                    "derive(longitude {longitude}); derive(latitude {latitude})"
                ),
            )
            print("Product " + base_file + ext + " imported")
            products.append(product_name)
        except Exception:
            print("Product not imported")
        i = i + 1

# Average the imported products and drop the time dimension from latitude/longitude
product_bin = harp.execute_operations(products, post_operations="bin();squash(time, (latitude,longitude))")
harp.export_product(product_bin, "merged.nc")

The merged netCDF file is 296 MB. That is very large: when I use this method for many files, it will need a huge amount of disk space.
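For reference, the size of the output grid follows from the `bin_spatial` arguments above. The sketch below reproduces the arithmetic, assuming the HARP convention that `bin_spatial` takes the number of grid edges, the first edge and the step for latitude and then for longitude. The helper name `edge_count` is hypothetical, and the 8-byte, uncompressed storage per grid cell is an assumption used only to get a rough per-variable size; the actual contents of merged.nc were not inspected.

```python
def edge_count(start, stop, step):
    """Number of grid edges needed to span [start, stop] at the given step."""
    return round((stop - start) / step) + 1


# Study-area bounds and resolution taken from the operations string above
lat_edges = edge_count(24.8, 39.9, 0.01)
lon_edges = edge_count(43.8, 63.4, 0.01)

# N edges delimit N - 1 cells along each axis
n_cells = (lat_edges - 1) * (lon_edges - 1)

# Assumption: one 8-byte float per cell, no compression
mb_per_variable = n_cells * 8 / 1e6

print(lat_edges, lon_edges, n_cells)  # 1511 1961 2959600
print(round(mb_per_variable, 1), "MB per gridded variable (assumed float64)")
```

Under these assumptions, every variable stored on the 0.01 degree grid takes roughly 24 MB, so a handful of gridded variables is enough to reach a file size of a few hundred MB.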

Second method:

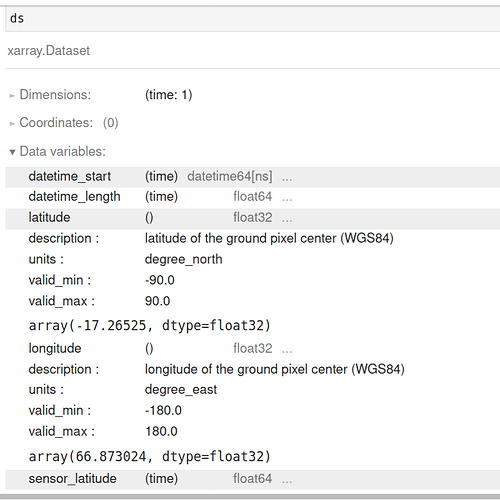

In this method I tried to use xarray. To do that, I first clip each file to my study area:

import os
import xarray as xr

# Open only the PRODUCT group of the file
ds = xr.open_dataset(file, group='PRODUCT')

# Keep only the pixels inside the study area
ds_ir = ds.where((44 < ds.longitude) & (ds.longitude < 65)
                 & (24 < ds.latitude) & (ds.latitude < 41), drop=True)

src_fname, ext = os.path.splitext(file)  # split filename and extension
save_fname = os.path.join(outpath, os.path.basename(src_fname) + '.nc')
ds_ir.to_netcdf(save_fname)

Each netCDF file clipped with xarray is 3.5 MB, which is a good size.

But when I try to merge these two clipped netCDF files, the first step (importing the product) fails:

product_name = harp.import_product('…/clipped_nc_in xarray.nc')

It returns:

CLibraryError: /clipped_nc_in xarray.nc: unsupported product

What is the problem? Why is a netCDF file created with xarray not supported by harp?